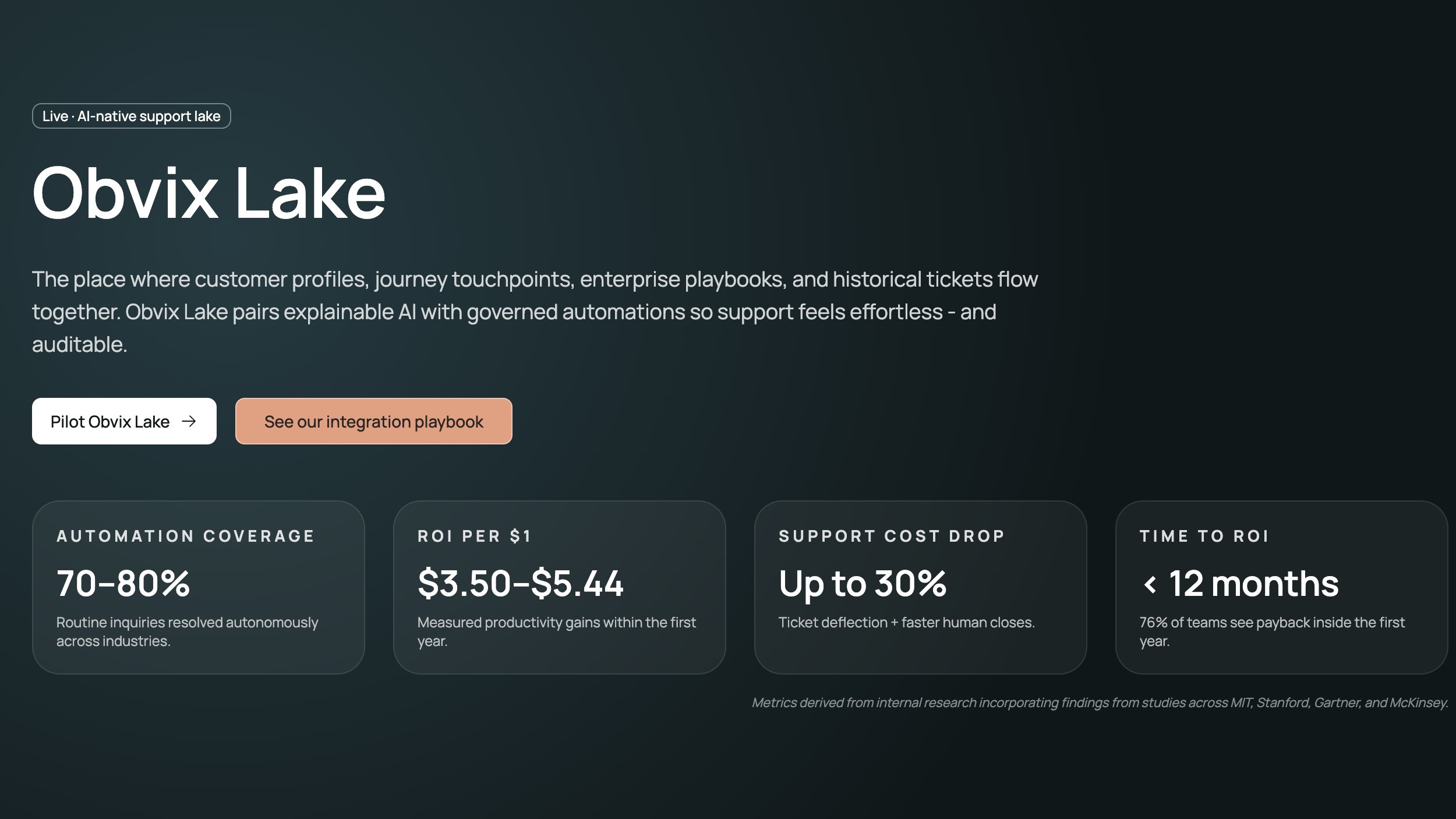

Obvix Lake

Built and integrated a production-ready AI support orchestration console, making complex backend workflows usable through a clean, operator-first frontend.

At Obvix Lake, I led frontend console development in React and TypeScript and integrated it tightly with Flask APIs to serve real support-team workflows. I delivered responsive modules for dashboard metrics, chat operations, ticket orchestration, review queues, KB workflows, and trend analytics, each with a clear visual hierarchy and fast operator navigation.

Project At A Glance

Timeline

Dec 2025

Industry

AI · Enterprise · SaaS

Contribution

UI/UX design, frontend console architecture, backend integration, analytics surfaces, and smooth support workflows

Collaboration

Summary

What this project demanded.

Role

Frontend Developer

Year

2025

Problem Space

The core challenge was exposing hybrid RAG orchestration logic in a way support agents could trust at a glance: confidence cues, citations, escalation outcomes, persona behavior, and ticket context all had to be visible without clutter or ambiguous state.

Operators needed to move across chat, ticket state, review queues, knowledge management, and metrics while understanding why the AI responded a certain way. The frontend had to surface confidence, citations, knowledge status, and escalation decisions with clarity instead of dashboard noise.

Capability 01

React

Capability 02

TypeScript

Capability 03

Flask

Capability 04

Hybrid RAG

Product Lens

Obvix Lake had to feel like an operating system for AI-assisted support.

The platform spans conversational AI, hybrid semantic + BM25 retrieval, self-RAG validation, GLPI escalation, document ingestion, and analytics. My job was to shape the frontend so operators could understand what the system knew, what it was uncertain about, and what action should happen next.

01

Operator expectations

- See AI answers, citations, and confidence signals without reading a wall of system detail

- Move fast between ticket routing, chat context, and knowledge review tasks

- Understand when the system should assist, validate, or escalate to a human agent

02

Backend realities

- Hybrid RAG combines embeddings and BM25 with multi-stage validation gates

- GLPI workflows require ticket sync, escalation context, and knowledge extraction from resolved tickets

- The console must support analytics, multi-persona support, KB queues, and chat within one coherent interface

Signal

60/40

Hybrid retrieval balance between semantic embeddings and BM25 lexical search
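
To make the 60/40 balance concrete, here is a minimal sketch of weighted score fusion. It assumes the 60% side is the semantic score and the 40% side is BM25, and that both signals are min-max normalized before mixing; the `ScoredDoc` shape and the `fuseScores` name are hypothetical and not taken from the Obvix Lake backend.

```typescript
// Hypothetical document shape; the real retrieval code is not shown here.
interface ScoredDoc {
  id: string;
  semantic: number; // e.g. cosine similarity from an embedding index
  bm25: number;     // raw BM25 lexical score
}

// Min-max normalize so both signals land in [0, 1] before they are mixed.
function normalize(values: number[]): number[] {
  const min = Math.min(...values);
  const max = Math.max(...values);
  if (max === min) return values.map(() => 0); // no spread means no ranking signal
  return values.map((v) => (v - min) / (max - min));
}

// Assumed weighting: 0.6 semantic, 0.4 BM25. Returns docs sorted best-first.
function fuseScores(
  docs: ScoredDoc[],
  semanticWeight = 0.6
): { id: string; score: number }[] {
  const sem = normalize(docs.map((d) => d.semantic));
  const lex = normalize(docs.map((d) => d.bm25));
  return docs
    .map((d, i) => ({
      id: d.id,
      score: semanticWeight * sem[i] + (1 - semanticWeight) * lex[i],
    }))
    .sort((a, b) => b.score - a.score);
}
```

Normalizing first matters because raw BM25 scores are unbounded while cosine similarities are not, so mixing them directly would let one signal dominate regardless of the weights.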

Signal

1 loop

Closed-loop learning from resolved GLPI tickets into the knowledge base

Signal

real-time

Analytics, issue clustering, and support console feedback surfaced in one product

Insight

In support products, trust comes from visibility. Operators need to see why the system responded, not just the response itself.

Core Interaction Shifts

The product decisions that changed how the experience felt.

Shift 01

Operator-Friendly Chat Experience

Mapped orchestration behavior into readable UI states including typing progress, citations/sources, confidence signals, and escalation outcomes.
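
One way to keep these UI states readable is a discriminated union that the chat view renders from. The sketch below is illustrative only: the `OrchestrationResult` fields, the `toViewState` helper, and the 0.7 confidence cutoff are assumptions, not the actual API contract.

```typescript
// Hypothetical backend payload; field names are assumptions.
interface OrchestrationResult {
  answer: string;
  confidence: number;         // assumed to be in the range 0..1
  sources: string[];          // citation identifiers
  escalatedTicketId?: string; // present only when the request was escalated
}

// Every state the chat bubble can be in, with only the data that state needs.
type ChatViewState =
  | { kind: "typing" }
  | { kind: "answered"; text: string; sources: string[]; confidence: "high" | "low" }
  | { kind: "escalated"; ticketId: string; text: string }
  | { kind: "failed"; reason: string };

// Map a (possibly pending) backend result to exactly one view state.
function toViewState(result: OrchestrationResult | null, error?: string): ChatViewState {
  if (error) return { kind: "failed", reason: error };
  if (result === null) return { kind: "typing" };
  if (result.escalatedTicketId) {
    return { kind: "escalated", ticketId: result.escalatedTicketId, text: result.answer };
  }
  return {
    kind: "answered",
    text: result.answer,
    sources: result.sources,
    confidence: result.confidence >= 0.7 ? "high" : "low", // assumed threshold
  };
}

// Exhaustive switch: adding a new state without handling it is a compile error.
function describe(state: ChatViewState): string {
  switch (state.kind) {
    case "typing":
      return "Assistant is typing";
    case "answered":
      return `${state.text} (${state.sources.length} sources, ${state.confidence} confidence)`;
    case "escalated":
      return `Escalated to ticket ${state.ticketId}`;
    case "failed":
      return `Something went wrong: ${state.reason}`;
  }
}
```

Because each variant carries only its own fields, the view cannot show citations on an escalated message or a ticket ID on a normal answer.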

Shift 02

End-to-End API Integration

Integrated frontend flows with backend endpoints for chat, personas, routing, review actions, analytics, feedback, and health checks with robust error handling.
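
As a rough illustration of the error-handling side, the sketch below wraps a request in a typed result so callers must handle network, HTTP, and parse failures explicitly. The `ApiResult` type, the `getJson` helper, and the injected `Fetcher` are hypothetical names for this example; the real console's client layer may look different.

```typescript
// A result type that forces callers to check for failure before using data.
type ApiResult<T> =
  | { ok: true; data: T }
  | { ok: false; error: "network" | "http" | "parse"; status?: number };

// Minimal subset of the fetch contract, so the helper is testable without a server.
type Fetcher = (url: string) => Promise<{
  ok: boolean;
  status: number;
  json(): Promise<unknown>;
}>;

async function getJson<T>(fetcher: Fetcher, url: string): Promise<ApiResult<T>> {
  let res: Awaited<ReturnType<Fetcher>>;
  try {
    res = await fetcher(url);
  } catch {
    return { ok: false, error: "network" }; // request never reached the server
  }
  if (!res.ok) {
    return { ok: false, error: "http", status: res.status }; // 4xx or 5xx
  }
  try {
    // The cast is unchecked; validate the payload if the shape is not trusted.
    return { ok: true, data: (await res.json()) as T };
  } catch {
    return { ok: false, error: "parse", status: res.status }; // body was not valid JSON
  }
}
```

In a browser the global `fetch` can be passed as the `fetcher` argument, for example `getJson<{ status: string }>(fetch, "/api/health")`, where the path is a placeholder rather than a documented endpoint.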

Shift 03

Scalable State + Component Architecture

Implemented predictable multi-step state flows and reusable typed components so the console remains maintainable as personas, KB sources, and analytics views expand.
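
A predictable multi-step flow can be modeled as a small reducer that ignores events arriving in the wrong step. The example below sketches a review-queue flow; the step names, event names, and `reviewReducer` are invented for illustration and are not the console's actual state model.

```typescript
// Each step carries only the data that is valid at that point in the flow.
type ReviewState =
  | { step: "idle" }
  | { step: "loading" }
  | { step: "reviewing"; itemId: string }
  | { step: "submitting"; itemId: string; decision: "approve" | "reject" }
  | { step: "done"; itemId: string }
  | { step: "error"; message: string };

type ReviewEvent =
  | { type: "LOAD" }
  | { type: "LOADED"; itemId: string }
  | { type: "DECIDE"; decision: "approve" | "reject" }
  | { type: "SAVED" }
  | { type: "FAILED"; message: string }
  | { type: "RESET" };

// Pure transition function: an event that is invalid for the current step
// returns the same state object, so stray events cannot corrupt the flow.
function reviewReducer(state: ReviewState, event: ReviewEvent): ReviewState {
  switch (event.type) {
    case "LOAD":
      return state.step === "idle" || state.step === "done" || state.step === "error"
        ? { step: "loading" }
        : state;
    case "LOADED":
      return state.step === "loading" ? { step: "reviewing", itemId: event.itemId } : state;
    case "DECIDE":
      return state.step === "reviewing"
        ? { step: "submitting", itemId: state.itemId, decision: event.decision }
        : state;
    case "SAVED":
      return state.step === "submitting" ? { step: "done", itemId: state.itemId } : state;
    case "FAILED":
      return state.step === "loading" || state.step === "submitting"
        ? { step: "error", message: event.message }
        : state;
    case "RESET":
      return { step: "idle" };
  }
}
```

A function with this `(state, event) => state` signature can be handed to React's `useReducer`, which keeps the transition logic testable outside of any component.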

Influence & Validation

What changed because of the work.

The orchestration engine became a practical day-to-day product: fast, understandable, and reliable for real operators handling live support workflows.

- Complex backend capabilities surfaced clearly without overwhelming the UI

- Improved reliability through resilient loading/error handling and edge-case coverage

- Reusable typed frontend foundations established for continued feature growth